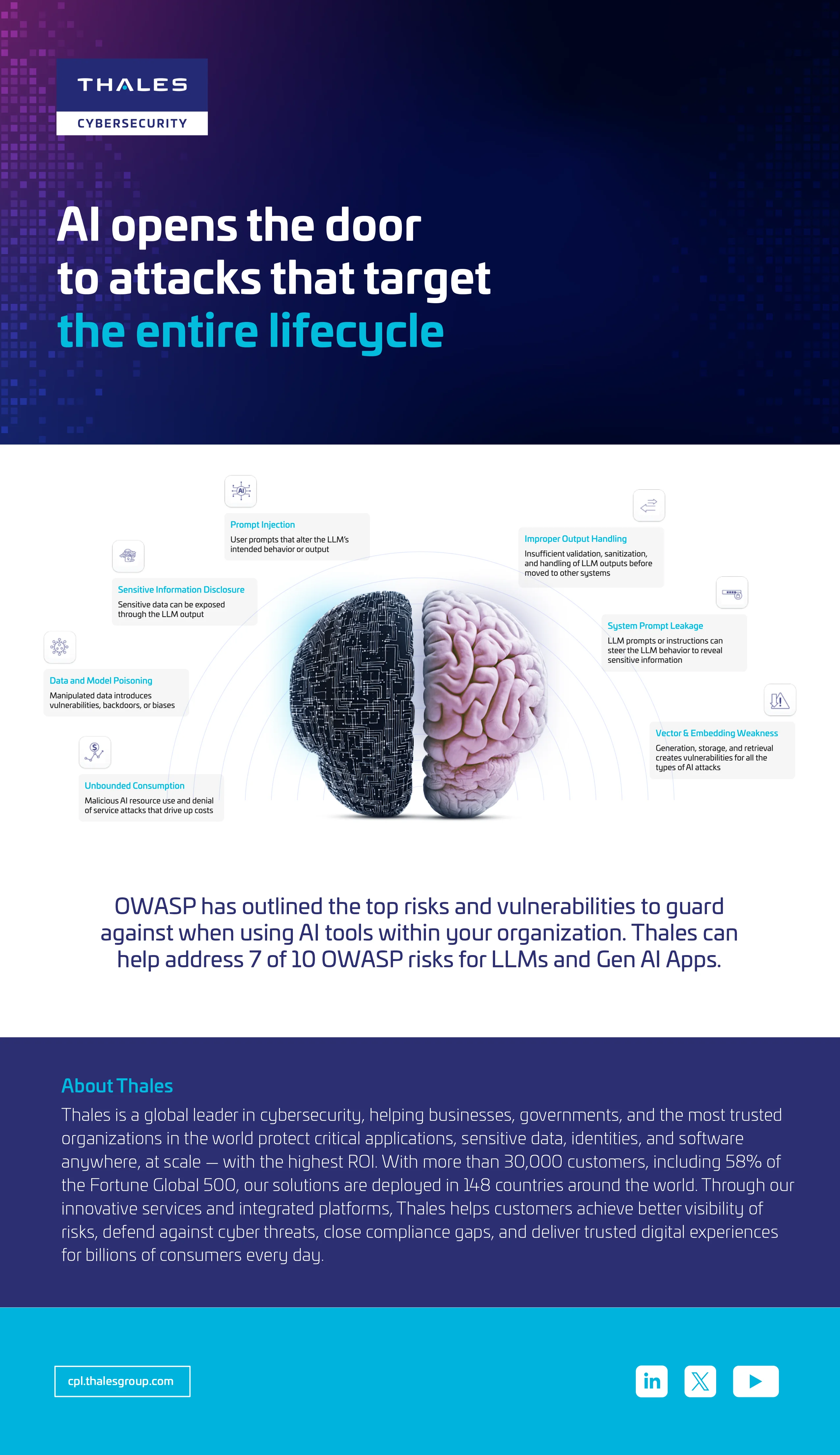

AI opens the door to attacks that target the entire AI lifecycle

As organizations rapidly adopt generative AI, new security challenges emerge that can expose sensitive data, introduce vulnerabilities, and drive unexpected costs. OWASP has identified the most critical risks associated with large language models (LLMs) and AI applications. Thales AI Security Fabric tackles key threats such as prompt injection, data leakage, and model poisoning, helping you proactively address and mitigate them.

- Explore the top OWASP risks impacting LLM and GenAI deployments

- Learn how vulnerabilities like prompt injection and sensitive data exposure occur

- Discover practical strategies to strengthen your AI security posture