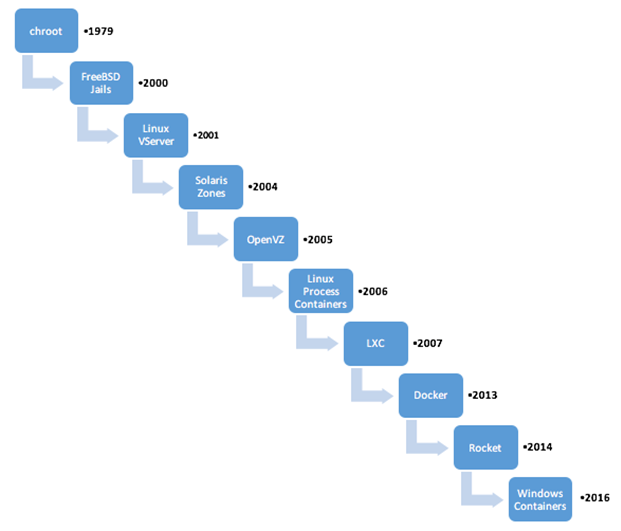

Containers have existed in one form or another since the early days of Unix and FreeBSD: chroot, FreeBSD jails and Solaris Zones are all examples. The current generation of containers (LXC, LXD and Docker) extends these earlier technologies.

What is Docker?

From Wikipedia: Docker is an open-source project that automates the deployment of applications inside software containers, by providing an additional layer of abstraction and automation of operating-system-level virtualization on Linux.

You can think of Docker as allowing the creation of isolated instances of applications that can run on a single system, even if those applications would normally require multiple OS instances. Developers package an application together with everything it requires, such as libraries and other dependencies, into a so-called Docker image. Docker containers are running instances of Docker images. The Docker engine makes this possible through Linux kernel interfaces such as cgroups and namespaces, which allow multiple containers to share the same kernel while each one sees only its own processes, network stack and filesystem.
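To make the namespace idea a little more concrete, here is a minimal Python sketch. It assumes a Linux host, where the kernel exposes each process's namespaces as symbolic links under `/proc/<pid>/ns`. The function names `namespace_ids` and `shared_namespaces` are our own, chosen for illustration; they are not part of Docker or of any library.

```python
import os


def namespace_ids(pid="self", proc_root="/proc"):
    """Return {namespace_name: identifier} for one process (Linux only).

    Each entry under /proc/<pid>/ns is a symlink whose target looks like
    'pid:[4026531836]'. Two processes are in the same namespace when the
    targets are identical.
    """
    ns_dir = os.path.join(proc_root, str(pid), "ns")
    ids = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            ids[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            # Not readable without sufficient privileges; skip this entry.
            continue
    return ids


def shared_namespaces(a, b):
    """Return the sorted names of the namespaces that two processes share."""
    return sorted(name for name in a if name in b and a[name] == b[name])


# Example (run as root on a Linux host; 12345 is a hypothetical PID of a
# process running inside a container):
#   host = namespace_ids(1)
#   contained = namespace_ids(12345)
#   print(shared_namespaces(host, contained))
```

For a process inside a typical container you would expect few, if any, of the namespaces to match those of the host's PID 1, even though both processes run on the same kernel.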

Conceptually then, Docker is a bit like a virtual machine, but Docker containers are run by the Docker engine rather than by a hypervisor. In addition, every container runs only the designated application software, as opposed to an entire Virtual Machine (VM) environment, where each VM requires a full operating system as well as the target application (with all the management and licensing costs associated with the VM). This makes containers much smaller than VMs, which lowers costs, speeds up start-up and improves performance. Hybrid approaches are also possible: containers can run on bare metal as well as within a VM.

Docker containers run on all major Linux distributions and even within Microsoft operating systems (although within a Linux VM on the Windows host). Microsoft also plans to support two variants of containers directly:

- Server Containers: These are what one would consider a typical container running user mode applications on a native operating system.

- Hyper-V Containers: These are thin VMs, which allow for user mode applications to be executed within a virtual machine running a scaled down server kernel.

Docker is designed as a tool that benefits both developers and system administrators, and as a result it is a critical element in many DevOps (developers + operations) tool chains. For developers, using Docker means that they can focus on writing code without worrying about the system it will run on. It also reduces development time by letting them build on the thousands of software tools, programs and applications already packaged as Docker containers. For operations staff, Docker gives flexibility and saves resources by reducing the number of VMs and hardware-based systems that need to be managed.

Containers and Data Security

While containers bring additional security to applications running in a shared environment, running applications within containers is not an alternative to taking proper security measures. SELinux and AppArmor security policies for access control are recommended. Be aware, though, that a fairly deep level of expertise is required to configure SELinux policies properly and so prevent the risk of "leaking" outside the container via inappropriate access.

When using containers, organizations need to take into account the possibility of sensitive information being stored inside containers, either deliberately or inadvertently. Even deleted and out-of-service containers represent a risk, as they may still hold sensitive data that can be retrieved. How can these concerns be addressed intuitively and without adding complexity? Vormetric Transparent Encryption (VTE) comes to the rescue, offering Docker protection by “following the data” in the containers and handling security with encryption and access control. VTE is container aware and is capable of encrypting the data, whether it is stored on a Docker host, within Docker images or as part of a Docker container. Access control policies can also be applied to the image base or to individual containers. These policies include Docker signing, the creation of a digital signature for the Docker application instance authorized to access containers and images. The signature provides an additional layer of security by authenticating the exact Docker application instance authorized to use Docker images.
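The general idea behind authenticating "the exact application instance" can be illustrated with a short Python sketch. To be clear, this is not how VTE implements Docker signing, which is not described in this post. It is only a simplified illustration that assumes a plain SHA-256 digest of the binary compared against a list of approved digests. The names `file_digest` and `is_authorized` are hypothetical.

```python
import hashlib
import hmac


def file_digest(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def is_authorized(binary_digest, allowed_digests):
    """Return True only if the digest matches one of the approved digests.

    hmac.compare_digest performs a constant-time comparison.
    """
    return any(hmac.compare_digest(binary_digest, d) for d in allowed_digests)


# Example (the path is an assumption; adjust it for your host):
#   approved = {file_digest("/usr/bin/dockerd")}   # recorded at setup time
#   later = file_digest("/usr/bin/dockerd")        # checked before access
#   print(is_authorized(later, approved))
```

In this simplified model, any change to the binary produces a different digest, so a modified or substituted executable no longer matches the approved list and would be denied access to the protected data.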

In the next blog post, we’ll cover some use cases and their security models, and show how Vormetric can provide additional security in these scenarios.

I-Ching Wang | Senior Director, Engineering
