This blog post covers our investigation and experimentation with threshold signature schemes, resulting in the construction, testing and integration of a modified threshold RSA signing protocol. The protocol was implemented using a blockchain for communication and verifiability, while also integrated with Adobe Reader (via a PKCS#11 interface) to demonstrate the ‘real-world’ usability of the system.

Situation - Migration of security applications to the cloud poses unique challenges in key management and protection: asymmetric keys which would previously have resided in tamper-resistant, on-premise Hardware Security Modules (HSM) now must either continue to reside in non-cloud HSMs (with attendant communication and integration issues) or must be removed from HSMs and exposed to cloud-based threats beyond an organization’s control, e.g. accidental loss, warranted seizure, theft etc.

Multiparty computation (MPC) techniques offer non-traditional alternatives for addressing these problems - in particular, threshold signature schemes allow ‘signing power’ to be distributed between a number of parties, such that a certain quorum of the parties are required to collaborate to generate valid signatures.

If you are familiar with secret-sharing techniques, this may sound familiar - however, while simple secret sharing schemes split sensitive values between parties, this implies that when the shared value is used, one party must be entrusted with the task of re-assembling the secret value, carrying out an operation and then destroy their reassembled secret. However, if this destruction does not take place, the trusted party is capable of acting unilaterally without the onward permission of the other parties.

Threshold signature schemes go beyond simple secret sharing of signing keys, ensuring that no ‘trusted assembler’ step (as above) takes place - instead the signing parties provide per-signature signing shares - when combined, these form a valid signature but the combiner gains no advantage allowing them to unilaterally sign different messages.

Some threshold signature schemes go even further, ensuring that no ‘trusted generator’ ever exists - the keypair can be generated by the untrusting nodes collaboratively, with the private key never existing at any point during generation or use. These two settings are referred to as ‘trusted dealer’ and ‘no trusted dealer’ schemes, respectively.

With these property, threshold signature schemes appear to offer attractive features for mitigating cloud-based threats:

If signing power is distributed among five servers, with any three required to sign, the schemes offer resilience against:

- Theft of (up to 2) signing key fragments

- OS/CPU compromise of (up to 2) endpoints

- Warranted seizure of (up to 2) endpoints

- Key fragment loss/corruption (up to 2)

In example use cases, a root signing key could be split into five fragments, such that any three of them were sufficient to sign certificates. The key fragments could be distributed over five continents, on five different hardware endpoints. A hostile government could carry out warranted seizure of one or two key fragments but would learn zero information regarding the private key.

If each of the key fragments were protected (by possibly heterogeneous) HSMs, the diversity of the environments/systems would clearly offer greater levels of protection/seizure-resistance than any single HSM could offer.

The number of trials required does not scale well with bitsize (requiring several hundred thousand attempts to 4096-bit RSA).

Given the attractive properties above, why are distributed signing techniques not widely used?

The Complication - Avoiding any trusted points leads to highly-complex protocols In practice, ‘no trusted dealer’ threshold schemes are most attractive, at a stroke doing away with concerns over data leakage within a device and (single) side-channel attacks.

Threshold signature schemes without trusted dealers generally have the following weaknesses in common:

- Relatively-complex key generation protocols (and, to a lesser extent, signing protocols)

- Comparatively poor performance

Complexity of key generation: When compared to non-threshold schemes, generating keys for threshold schemes is a complex business.

The main scheme we used was a threshold RSA scheme - an RSA keypair being generated by a number of nodes such that the private exponent never exists. While normal RSA-1024 generation takes a few milliseconds, dealerless generation of a 1024-bit RSA key takes several minutes and can generate protocol transcripts approaching a gigabyte in size.

The bulk of the protocol consists of a large number of distributed trial computations based on distributively-chosen random numbers, till a working combination is found. Multiple stages of distributed computation are involved, along with multiple rounds of secret sharing and distribution of intermediate values. At each stage, we want to be sure that all parties are acting honestly and not sharing corrupt/manipulated values etc. This requires commitments and verifications to carried out at each stage of the protocol, adding greatly to the time and bandwidth required.

Even if malicious/compromised parties do not try to influence the key generation, all parties need to agree at multiple points on certain values, among them the public RSA modulus, leading to a need for distributed consensus methods.

In the event that a corrupt node did try to influence or corrupt the generated key, being able to unambiguously ‘point the finger’ is a difficult problem in such key generation schemes.

Speed of key generation: Key generation in RSA threshold schemes is highly time-consuming, requiring several minutes in our implementation (for 1024-bit RSA) to hours (for 4096-bit RSA), i.e. definitely not a real-time task.

At the root of this is a repeated trial procedure in which the parties jointly generate candidate RSA modulii (without any party knowing the p/q values), followed by jointly testing the modulii to determine whether they are the product of two primes (and thus usable).

Signing operations, in contrast, are relatively trivial in terms of both complexity and speed, requiring a few milliseconds of CPU time, signature times being dominated by network latency between nodes.

Questions - How to verify execution in the face of protocol complexity and how to improve speed?

Possibly the main reason for the lack of adoption of threshold signature schemes has been the daunting task first of implementing the schemes in a secure manner and secondly, the task of verifying ~1GB worth of protocol transcript to ensure that no party modified values or otherwise influenced the process. This process involves the checking of thousands of commitments, proofs-of-knowledge and the consistency of intermediate values. If these cannot be verified and/or any ambiguity regarding the proper generation exists, trusting that the threshold key has been honestly generated (as opposed to a malicious party simulating the process) becomes very difficult. Likewise, verifying that all parties have observed the same transcript becomes crucial.

In terms of speed, theoretical improvements to the speed of distributed RSA key generation continue, but the state of the art is not far from the scheme we implemented. Improving speed of key generation by parallelisation, ‘batching’ of messages and ‘optimistic execution’ are areas we looked into.

Answer - Put distributed RSA key generation on a blockchain!

Despite “put X on a blockchain” being gratuitous in many cases, using a blockchain underneath distributed RSA generation makes sense from a number of angles:

- Protocol transcripts are recorded and agreed upon

- Consensus on intermediate values is trivial

- Commitments to messages are provided (in part) by transaction signing

- Verification of identities of participants and possibility of using PKI to bootstrap the generation

In a threshold-RSA-on-blockchain scheme, we have an underlying system which provides transcript storage and integrity, consensus on intermediate and signature values, node-to-node private communication channels, resilience and recoverability.

With some help from an acronym generator, we christened our system “BADGER - Blockchain Auditable Distributed key GEneRation".

By writing all key-generation messages to the blockchain, followed by all signatures, we can follow the entire generation and usage of a particular keypair from the moment the original participants began generation.

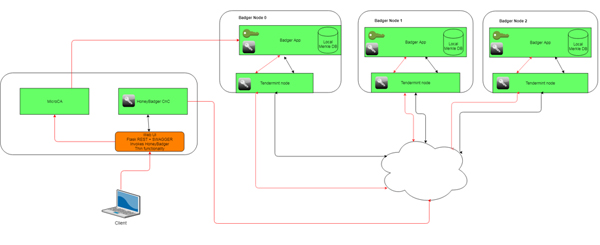

Our developed system uses the Tendermint consensus engine (https://tendermint.com/) and creates one blockchain per key. Tendermint is a consensus engine, providing the lower-level communication and consensus protocols required for blockchain applications.

The diagram gives a high-level overview of a 3-participant key generation blockchain, along with client interfaces.

Three Badger nodes are shown, each containing a tendermint node. The tendermint nodes are in communication with private, public and off-chain channels. Initial configuration of the nodes to be involved in a key generation takes place via a specially-crafted genesis file.

The client interacts with the system via a web user interface with a command-and-control application (HoneyBadger) coordinating the Badger applications.

Integration with Adobe Reader

To test real application usability, our Badger system was integrated with Adobe reader via a PKCS#11 interface handler, allowing Adobe reader to request and validate PKCS#1 signatures from our blockchain.

With the blockchain nodes running in cloud servers, total signing times of around 20 seconds were achievable, with any 4 out of 5 blockchain nodes online.

Key Generation Performance Results

Key generation times are non-deterministic, following a distribution with a long tail. Average key generation times are given below for our system:

RSA bitsizeTypical timesMedian # attempts

10241 min - 5 min~3,500

20485 min - 45 min~40,000

307215 min - 2 hours~125,000

409645 min - 6 hours~500,000

We performed little in the way of implementation optimisation, beyond slight modification of tendermint parameters. The fastest reported threshold key generation results feature nodes connected by 40Gbps interfaces and featuring highly-parallel implementations.

Further reading

A pre-submission paper detailing the actual scheme used along with full technical/theoretical details is available [HERE].