This blog post accompanies a talk given at DevSecCon London 2018 (slides).

A common development trend over the last few years has been the shift from traditional monolithic approaches to microservice architectures. A non-exhaustive list of reasons for this shift:

- Reusability: a microservice can support multiple projects which have a similar need and can be deployed dynamically.

- Change-ability: a microservice presents a network API. Changing the implementation of a microservice need not impact on its consumers in the same way as linking it as a code dependency.

- Consume-ability: microservices can be consumed easily on a range of platforms whether PC, mobile, cloud or even mainframe.

- Incremental delivery: the failure to deliver a small piece of functionality need not impact the rest of a system unnecessarily.

- Enabling team autonomy: multiple autonomous teams can co-build a solution whilst remaining largely independent. System implementation becomes more scalable.

- Compartmentalized attack surface: the exploitation of a single microservice does not mean entire system compromise.

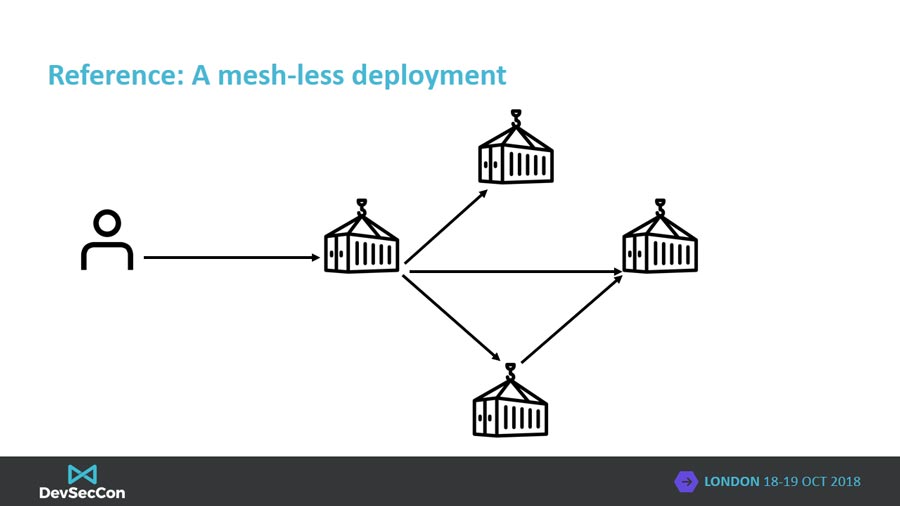

The reasons above are largely operational and sit outside the security domain. From a security standpoint, microservices have some less-than-ideal consequences:

- Holistic system understanding is undermined: as more autonomous teams work to deliver a system, the understanding can be diluted. As a result it can become harder to reason about the security of a system.

- Immature tooling: monolithic applications have a wealth of tooling, including static and dynamic analyzers that can be invoked at build time. In comparison, microservice tools tend towards active monitoring.

- Autonomous teams do things differently: teams use different tools and processes, which increases the attack surface and makes rigorous review more difficult.

Facebook’s recent “View As” exploit is an example of a complex system that was compromised through vulnerabilities in the interactions between services. The exploit itself was complex and difficult to mitigate for some of the reasons listed above. However, a number of tools, patterns and techniques are emerging that can help in these situations.

It is important to understand that the same core tenets of security (authentication, authorization and accounting) still apply to microservices, and that doing these well will mitigate most common attacks. How and where these concepts can be applied is the subject of the rest of this blog post.

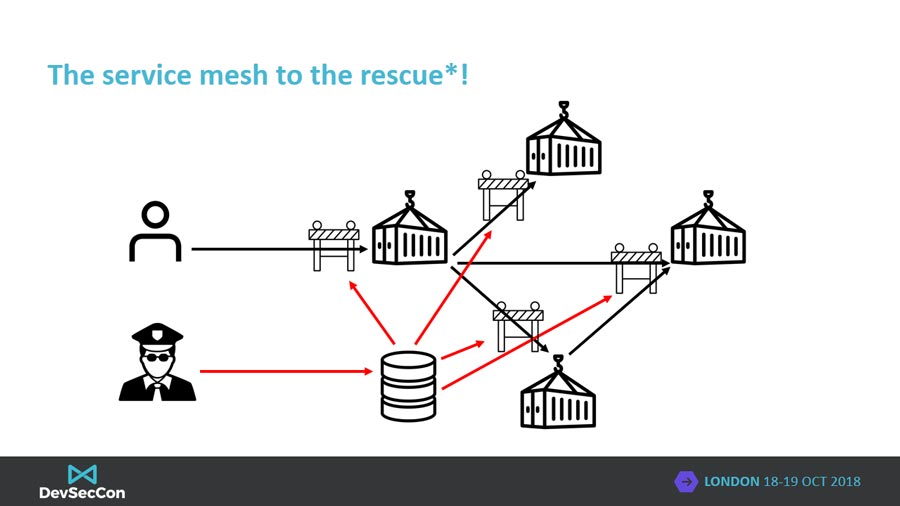

Spoiler alert! The answer is a service mesh. A service mesh is a network-level function that can be programmed to provide many application-layer capabilities. Communication between services is transparently intercepted at the application layer (OSI Layer 7) by sentries at the entry point of each microservice, and these sentries enforce defined policies. Service meshes generally follow the classic Software-Defined Networking (SDN) split into a management plane and a data plane. The management plane provides the capability to program and administer the mesh, distributing configuration to the data plane components. The data plane, implemented by the sentry (or sidecar), intercepts all network activity and enforces policy. More later.
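To make the management/data plane split concrete, here is a minimal sketch in Python. It is an illustration only: `Sidecar`, `ManagementPlane`, `push_policy` and the path-based policy are hypothetical names invented for this example, not the API of any real service mesh.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical types for illustration; not taken from any real mesh.
Request = Dict[str, str]
Handler = Callable[[Request], str]


@dataclass
class Sidecar:
    """Data plane: sits in front of one microservice and enforces policy."""
    service: Handler
    allowed_paths: List[str] = field(default_factory=list)

    def handle(self, request: Request) -> str:
        # Every inbound request passes through the sidecar first.
        if request.get("path") not in self.allowed_paths:
            return "403 Forbidden"
        return self.service(request)


@dataclass
class ManagementPlane:
    """Management plane: distributes configuration to every sidecar."""
    sidecars: List[Sidecar] = field(default_factory=list)

    def push_policy(self, allowed_paths: List[str]) -> None:
        for sidecar in self.sidecars:
            sidecar.allowed_paths = list(allowed_paths)


def orders_service(request: Request) -> str:
    # The microservice itself contains no security logic.
    return "200 OK: orders"


sidecar = Sidecar(service=orders_service)
mesh = ManagementPlane(sidecars=[sidecar])

before = sidecar.handle({"path": "/orders"})  # no policy pushed yet, so denied
mesh.push_policy(["/orders"])
after = sidecar.handle({"path": "/orders"})   # policy now allows the call
```

The point of the sketch is that `orders_service` never changes: the decision to allow or deny lives entirely in the sidecar, and it changes only when the management plane pushes new configuration.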

Authentication

Authentication: The act of uniquely validating the identity of a user or service.

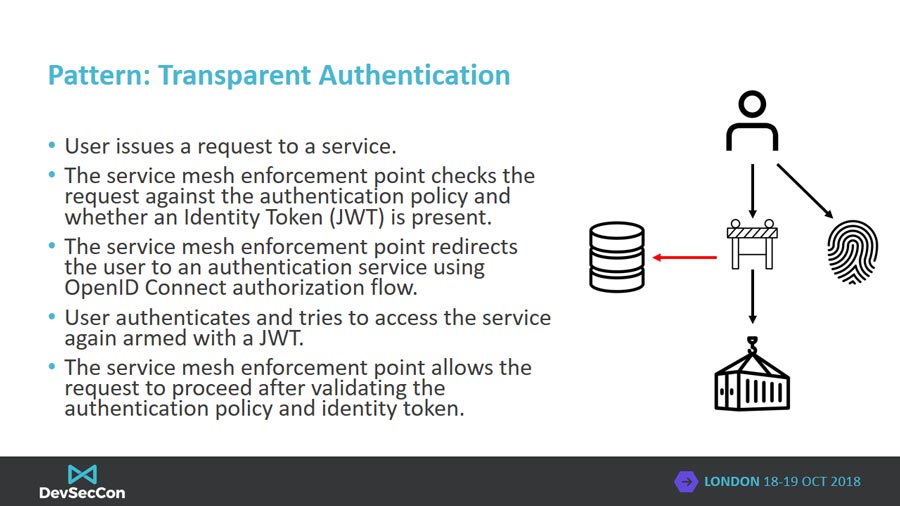

Authenticating system actors is core to enabling robust access control of resources exposed by a microservice API. Simplistically, we can split authentication into 2 broad categories: human and service authentication. Humans authenticate using unique identifiers such as email addresses together with passwords and, ideally, a second factor such as a security token. In comparison, services and applications use unique identifiers and either pre-shared secrets, bearer tokens or asymmetric cryptography to authenticate themselves to a system. Often in the microservice domain, humans participate in protocols such as SAML or OpenID Connect whilst services use an OAuth2 confidential client flow. No matter the authentication protocol, the result is usually a cryptographically signed token, issued by the authenticator, which a microservice can consume to verify a user’s or service’s identity.

A service mesh can be configured to transparently authenticate users in a consistent manner across a microservice deployment. By intercepting application-layer communication, the service mesh can check for the presence of identity tokens and force the user to authenticate if one is not present.
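As a rough sketch of that interception step, the Python below checks each request for a signed identity token and redirects to login when none is present or the signature does not verify. The assumptions are loud ones: a shared HMAC key stands in for the authenticator's signing key (real deployments typically use asymmetric signatures, for example in JWTs), and the `x-identity-token` header, the token layout and the function names are all hypothetical.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional

# Assumption: a shared HMAC key stands in for the authenticator's signing key.
SIGNING_KEY = b"demo-key-not-for-production"


def issue_token(subject: str) -> str:
    """Authenticator: return '<base64 payload>.<hex signature>'."""
    payload = base64.urlsafe_b64encode(json.dumps({"sub": subject}).encode())
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_token(token: str) -> Optional[str]:
    """Return the subject if the signature is valid, otherwise None."""
    payload, _, signature = token.partition(".")
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison; the payload is only parsed after it verifies.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None
    return json.loads(base64.urlsafe_b64decode(payload))["sub"]


def intercept(headers: dict) -> str:
    """Sidecar: require a valid identity token before forwarding."""
    token = headers.get("x-identity-token")  # hypothetical header name
    subject = verify_token(token) if token else None
    if subject is None:
        return "302 redirect to login"
    return f"200 forwarded as {subject}"
```

A request with no token, or with a token whose signature has been altered, gets the redirect; a request carrying a token from `issue_token("alice")` is forwarded with the verified identity attached.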

Authorization

Authorization: the act of deciding whether an operation can be performed.

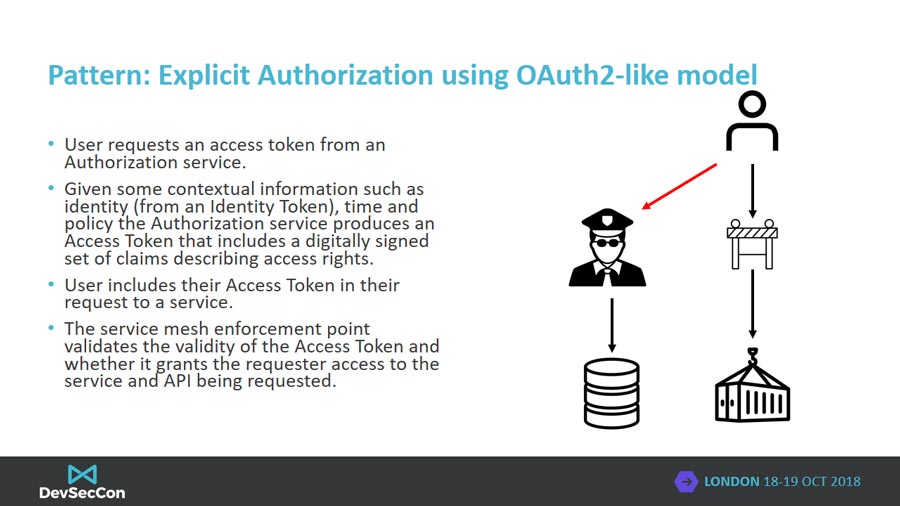

Given that authentication is the act of identifying an actor, authorization builds upon that knowledge to assert whether a given actor can perform microservice API calls. There are 2 variants of authorization: explicit and Just-in-Time.

Explicit authorization relies on the actor requesting a cryptographic token which asserts their right to perform certain operations. The authorization token service uses the actor’s identity, the scope of requested access and other contextual information (time of day, IP address, etc.) to decide whether the actor can perform operations. The authorization token service includes claims about the scope of access in the cryptographically signed token it returns to the actor. The actor then includes the authorization token in API requests. The service mesh can intercept these requests, validate that the token is signed by a trusted authority, and verify that the claims it contains match the authorization policy that allows the operation to be performed.
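The explicit flow can be sketched in a few lines of Python. Everything named here is an assumption made for illustration: the HMAC key stands in for the token service's signing key, and the `GRANTS` table, `ROUTE_POLICY` mapping, scope names and token layout are hypothetical.

```python
import hashlib
import hmac
import json
from typing import List

# Assumptions: the key, the grants table and the route policy are hypothetical.
AUTHZ_KEY = b"demo-authz-key"
GRANTS = {"ci-service": {"repo:read", "repo:status"}}  # scopes each actor may hold
ROUTE_POLICY = {("GET", "/repo"): "repo:read", ("POST", "/repo"): "repo:write"}


def sign(body: str) -> str:
    return hmac.new(AUTHZ_KEY, body.encode(), hashlib.sha256).hexdigest()


def request_token(actor: str, scopes: List[str]) -> str:
    """Authorization token service: grant only scopes the actor is entitled to."""
    granted = sorted(set(scopes) & GRANTS.get(actor, set()))
    body = json.dumps({"sub": actor, "scopes": granted})
    return body + "|" + sign(body)


def mesh_allows(token: str, method: str, path: str) -> bool:
    """Sidecar: check the signature first, then the claims against policy."""
    body, _, signature = token.rpartition("|")
    if not hmac.compare_digest(signature.encode(), sign(body).encode()):
        return False
    required = ROUTE_POLICY.get((method, path))
    return required is not None and required in json.loads(body)["scopes"]


# The actor asks for more than it is entitled to; only repo:read is granted.
token = request_token("ci-service", ["repo:read", "repo:write"])
can_read = mesh_allows(token, "GET", "/repo")
can_write = mesh_allows(token, "POST", "/repo")
```

Note that the decision about scope is made once, when the token is issued. At request time the sidecar only verifies the signature and compares claims with policy, which is why this model suits scopes that change rarely.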

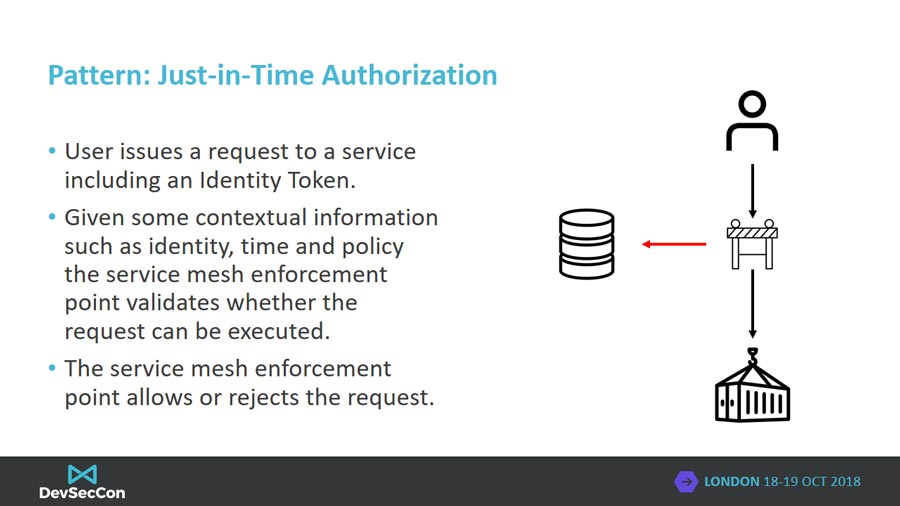

Just-in-Time authorization makes authorization decisions inline with each request, based on the identity of the caller and other contextual information. The caller is not required to request a scoped access token; instead, the scope of access is identified in the context of the API call. A service mesh may implement this pattern of authorization.

The 2 models of authorization presented are both valid approaches, and the choice between them comes down to the deployment scenario. Explicit authorization lends itself to a separate authorization service where the scope of access of a caller is not likely to change often. A good real-world example is configuring github.com to authorize access to a continuous integration service so that changes to a private repository can be monitored. JiT authorization makes more sense when the authorization service and microservices are all controlled by the same entity, when authorization policy is likely to change often, or when the scope of access is unknown. A good analogy would be a user interacting with an AWS web console. The scope of access the user requires for their console session is unknown upfront, so decisions should be made Just-in-Time.
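By contrast, a Just-in-Time check needs no token request step at all. The sketch below evaluates each call against policy using the caller's identity plus context (source IP and time of day). The `CallContext` fields, the `POLICY` table and its contents are hypothetical and exist only for this example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class CallContext:
    caller: str      # identity established by authentication
    method: str
    path: str
    source_ip: str
    hour: int        # 0-23, time of day of the request


# Hypothetical policy: who may do what, from where, and when.
POLICY = {
    "alice": {
        "operations": {("GET", "/reports"), ("POST", "/reports")},
        "network": "10.0.0.0/8",
        "hours": range(8, 18),
    },
}


def authorize(ctx: CallContext) -> bool:
    """Decide inline, per request; the caller never asks for a scoped token."""
    rule = POLICY.get(ctx.caller)
    if rule is None:
        return False
    return (
        (ctx.method, ctx.path) in rule["operations"]
        and ip_address(ctx.source_ip) in ip_network(rule["network"])
        and ctx.hour in rule["hours"]
    )
```

Because the policy is consulted on every call, a change to `POLICY` takes effect on the next request, which is the property that makes this model attractive when policy changes often.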

Accounting

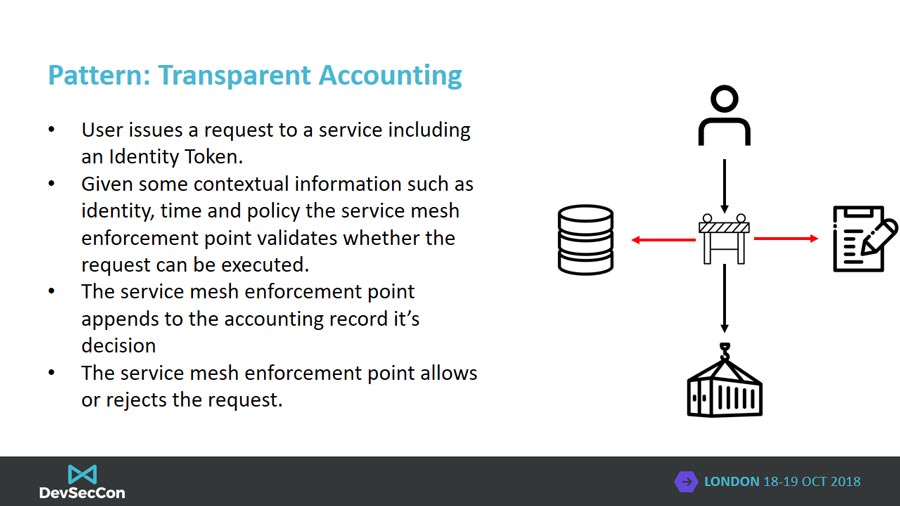

Accounting (audit): the measurement of what happened and why

Accounting covers the capture of events that have happened. Examples of accounting records could be successful user authentications, authorization failures or the number of requests a user makes to a single API. The importance of accounting is often lost, as it is generally uninteresting for most sunny-day system scenarios. The value of robust accounting largely comes when trying to understand the scope of a system compromise or the nature of a vulnerability, or potentially as input to a feedback loop for making authorization decisions. In addition, auditors and regulators will favour organizations with robust accounting.

A service mesh can be configured to perform automatic accounting of network API requests. On each request, the service mesh can create an audit record that includes information such as the API being called, the resource being operated upon, the identity of the requestor, the time of day and whether the request succeeded or not. By generating audit records at the API request level, we’re able to generate consistent records no matter what underlying technology may be implementing a microservice.
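A minimal sketch of such per-request accounting is shown below. The record fields follow the list in the paragraph above; the `audited` wrapper, the in-memory `AUDIT_LOG` and the example handler are hypothetical stand-ins for a sidecar and a real audit sink.

```python
import json
from datetime import datetime, timezone
from typing import Callable, List

AUDIT_LOG: List[str] = []  # stand-in for a real audit sink

Handler = Callable[[str, str], bool]


def audited(api: str, handler: Handler) -> Handler:
    """Wrap a handler so that every call emits exactly one audit record."""
    def wrapper(requestor: str, resource: str) -> bool:
        succeeded = False
        try:
            succeeded = handler(requestor, resource)
            return succeeded
        finally:
            # Runs on success, failure and exceptions alike.
            AUDIT_LOG.append(json.dumps({
                "api": api,
                "resource": resource,
                "requestor": requestor,
                "time": datetime.now(timezone.utc).isoformat(),
                "succeeded": succeeded,
            }))
    return wrapper


def delete_order(requestor: str, resource: str) -> bool:
    # Toy service logic: only alice may delete orders.
    return requestor == "alice"


delete_order_audited = audited("DELETE /orders", delete_order)
delete_order_audited("alice", "order-17")
delete_order_audited("mallory", "order-17")
```

The handler knows nothing about auditing, so the same wrapper produces records with the same shape regardless of how each service is implemented.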

Policy-as-Code

One of the most significant features of a service mesh is the ability to configure it using a Policy-as-Code or Security-as-Code pattern. Policy-as-Code is the definition of service mesh enforcement policies in structured, human-readable formats that are also machine-parsable. The benefits are:

- Policy can be treated like any other source code and can be managed with the same tools. Policy for a microservice can reside in the same repository as the source code and can be reviewed in the same way.

- Security enforcement is documented automatically, without resorting to external artifacts such as text documents, making it easier to acquire a holistic view of a system.

- Improved observability and validation and thus reasoning. Using a structured Domain Specific Language (DSL) enables static and dynamic analysis tools to be written that can reason about policy before and during deployment. In addition the flow of authorized requests through a system can be calculated and visualized before deployment highlighting potential “View As” vulnerabilities before they are exploited.

There are many excellent examples of Policy-as-Code that can be found at https://istio.io.
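To illustrate the static-analysis benefit, the sketch below parses a small policy document and computes every service a request could transitively reach from a given entry point before anything is deployed. The JSON schema and the service names are invented for this example and are not Istio's policy syntax.

```python
import json
from typing import Dict, List, Set

# Hypothetical policy format; real meshes define their own schemas.
POLICY_JSON = """
{
  "allow": [
    {"from": "web-frontend", "to": "profile-service"},
    {"from": "profile-service", "to": "preview-service"},
    {"from": "preview-service", "to": "token-service"}
  ]
}
"""


def reachable(policy: dict, start: str) -> Set[str]:
    """Every service a request entering at `start` could transitively reach."""
    edges: Dict[str, List[str]] = {}
    for rule in policy["allow"]:
        edges.setdefault(rule["from"], []).append(rule["to"])
    seen: Set[str] = set()
    stack = [start]
    while stack:
        for nxt in edges.get(stack.pop(), []):
            if nxt not in seen:  # guards against cycles in the policy
                seen.add(nxt)
                stack.append(nxt)
    return seen


policy = json.loads(POLICY_JSON)
flows = reachable(policy, "web-frontend")
# Services this entry point should never reach, directly or indirectly.
unexpected = flows & {"token-service"}
```

Each rule looks reasonable on its own, yet together they let a request from the frontend reach the token service through two intermediaries. A check like this could run in a build pipeline and fail when `unexpected` is non-empty.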

Conclusion

The move towards microservices is throwing up many security challenges which we cannot address with older-style tooling. Maintaining a holistic view of a system is important from a security perspective, and we must use tools that make this both simple and native for development teams so as to enable greater autonomy. Creating tools that teams can adopt to automatically enforce and document their security policies allows security to become a first-class citizen in modern development and to be implemented earlier in the development lifecycle. In other words, we need new tools to allow security to “shift left”.